Most AI systems today are built like vending machines.

You insert a prompt.

You receive a response.

The transaction ends.

For experimentation, that model works. For classrooms, research teams, organizations, and civic groups, it does not.

Over the past year, Topoi has been evolving from a writing-focused AI assistant into something more ambitious: an API-first infrastructure layer for structured, accountable, collaborative AI.

This is not an announcement of general availability. Topoi is still under active development, and substantial work remains ahead. But the architectural direction is now clear—and that direction is what makes the project worth describing.

From Tool to Architecture

What began as a Socratic writing companion gradually revealed a deeper problem. The challenge was not generating responses. The challenge was managing context.

Who is participating?

Under what authority?

With which defaults?

At whose cost?

Within what boundaries?

As these questions accumulated, it became obvious that AI applications intended for institutional use require more than a chat interface. They require structure.

Topoi’s recent design advances have focused on building that structure.

Every interaction now lives inside a session.

Sessions can contain multiple participants.

Participants operate within defined roles.

Prompts are layered rather than overwritten.

Usage and cost are ledgered rather than obscured.

Model providers are abstracted rather than hardwired.

This is infrastructure work. And infrastructure takes time.

Process Embedded in Code

My background as a writing teacher shapes this design more than any technical trend.

In teaching composition, I have always argued that process matters more than product. A finished essay detached from its drafting history is pedagogically hollow.

AI systems face the same risk. A polished answer produced without structure, attribution, or accountability may be impressive—but it is fragile.

Topoi’s architecture embeds process into the system itself:

- Prompts can be reviewed before activation.

- Interventions can be injected mid-session.

- Roles define who may alter system behavior.

- Organizational defaults can guide usage.

- Costs are visible and attributable.

None of this makes the model “smarter.”

It makes the environment more responsible.

Safety and Governance by Design

Much of the public conversation about AI safety focuses on output moderation. Topoi approaches safety earlier in the lifecycle.

System prompts can be evaluated heuristically. They can be reviewed by a dedicated prompt-review agent. Future development will expand into transcript-level monitoring and structured intervention.

But more importantly, safety is tied to governance. Authority is defined. Participation is structured. Oversight is possible.

AI does not operate in a vacuum. It operates within roles.

This is a slower path than shipping a chatbot. It is also, I believe, the more durable one.

Accountability as a First-Class Principle

Another area of significant design progress has been usage tracking and cost transparency.

Tokens are not abstract. Audio minutes are not free. Providers charge. Organizations need visibility.

Topoi includes ledger-based usage accounting at the session and organizational level. API keys are attributable. Model selection can be abstracted and managed centrally.

These features are still being refined. But their presence reflects a deliberate choice: AI systems must be economically legible if they are to be responsibly deployed.

A Platform in Development

It is important to be clear: Topoi is not yet a general-purpose platform ready for broad third-party integration. Several months of development remain before the system reaches a stage suitable for public developer onboarding.

What exists today is a solid architectural foundation:

- API-first design

- Role-aware sessions

- Layered prompt management

- Usage and cost ledger infrastructure

- Provider abstraction

- Streaming and voice endpoints under active development

The coming phase will focus on hardening, documentation, developer tooling, and expanded orchestration capabilities.

This is not a sprint to feature parity with existing chat platforms. It is a deliberate effort to build durable AI infrastructure.

The Larger Vision

There is a strong cultural pull toward increasingly autonomous AI systems—systems that operate efficiently and invisibly.

But invisibility can erode accountability.

We do not need to fear AI becoming human.

We need to fear humans becoming absent.

Topoi is an attempt to design against that absence.

It is not an attempt to build the most independent AI.

It is an attempt to build AI that remains embedded within human institutions, roles, and responsibilities.

The models will improve rapidly over the next year. That is almost certain.

What matters just as much is the architecture into which those models are integrated.

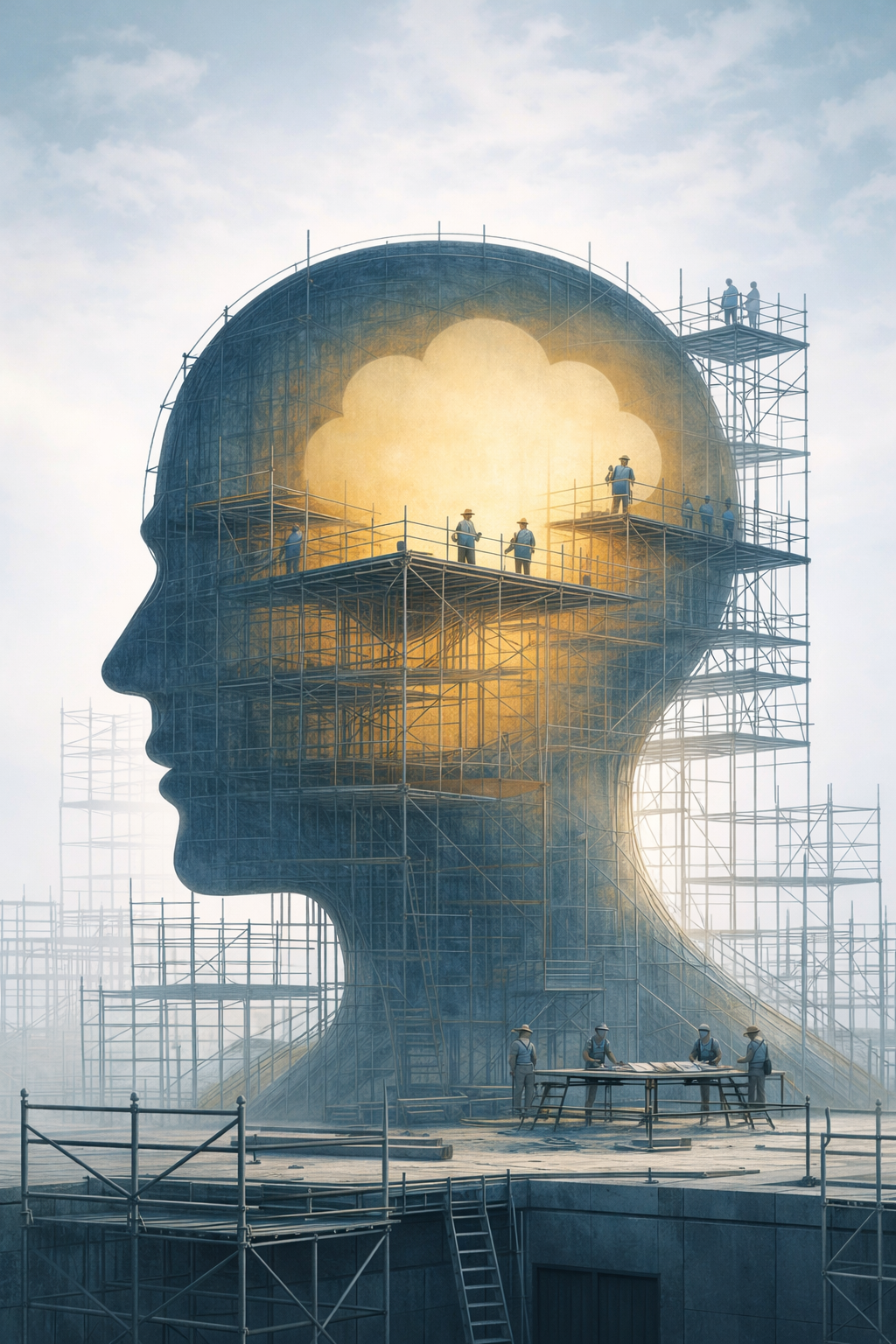

Topoi is still under construction. But its direction is now defined: to provide the structural layer that collaborative, accountable AI systems require.

The work continues.